Aerial versus Terrestrial Scenes

People are highly skilled at recognizing scenes and can grasp the gist of a scene from a very brief glimpse. But humans are accustomed to viewing the world from a particular viewpoint, so what happens when that viewpoint changes?

People usually see the world from a terrestrial viewpoint, limited to whatever is currently in their field of view at eye level. With some exceptions (such as traveling in an airplane), aerial (bird's-eye) views are rare, and they have been available to humans for only around 150 years (Loschky, Ringer, Ellis & Hansen, 2014, in press). Because we have little experience with aerial views, they are difficult to comprehend. However, past research has shown that experts in landscape views (such as geographers) are better at categorizing aerial views than non-experts, indicating that scene categorization performance can be influenced by experience (Lloyd et al., 2002).

Because of our greater experience with terrestrial scenes, people are biased to make eye movements along horizontal, terrestrial scan paths rather than in vertical or oblique directions. However, our visual scan patterns can change depending on whether we are looking at a terrestrial scene or an aerial scene. When people first view an image, they use ambient, global processing with large saccade amplitudes but short fixation durations, taking in as much information as possible to understand the gist of the scene. Over time, they transition to focal, localized processing with small saccade amplitudes but longer fixation durations, and begin to analyze details. When presented with aerial images, however, people gaze more broadly around the entire image and spend more time at each fixation location. This produces a less pronounced transition between the two processing strategies and suggests that aerial views are more difficult to comprehend (Pannasch, 2014).
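The ambient and focal modes described above can be made concrete with a small sketch. It labels each fixation by combining its duration with the amplitude of the saccade that follows it. The cutoff values, the `Fixation` type, and the sample data are hypothetical and chosen only for illustration; they are not taken from the studies cited here, and real analyses may define the modes differently.

```python
from dataclasses import dataclass

# Hypothetical cutoffs for illustration only; not values from the cited studies.
AMPLITUDE_CUTOFF_DEG = 5.0   # saccades larger than this count as "large"
DURATION_CUTOFF_MS = 180.0   # fixations shorter than this count as "short"


@dataclass
class Fixation:
    duration_ms: float        # how long the eye rested at this location
    next_saccade_deg: float   # amplitude of the saccade that follows


def label_mode(fix: Fixation) -> str:
    """Label a fixation as 'ambient', 'focal', or 'mixed'."""
    short_fixation = fix.duration_ms < DURATION_CUTOFF_MS
    large_saccade = fix.next_saccade_deg > AMPLITUDE_CUTOFF_DEG
    if short_fixation and large_saccade:
        return "ambient"      # brief look, then a big jump
    if not short_fixation and not large_saccade:
        return "focal"        # long look, then a small shift
    return "mixed"            # the two measures disagree


def ambient_share(fixations: list[Fixation]) -> float:
    """Fraction of fixations labelled ambient (0.0 for an empty list)."""
    if not fixations:
        return 0.0
    labels = [label_mode(f) for f in fixations]
    return labels.count("ambient") / len(labels)


# Made-up fixations from the start and the end of a viewing period.
early = [Fixation(150, 8.0), Fixation(160, 6.5), Fixation(170, 7.2)]
late = [Fixation(320, 1.5), Fixation(280, 2.0), Fixation(150, 6.0)]

print("early ambient share:", ambient_share(early))
print("late ambient share:", ambient_share(late))
```

Under these assumptions, a clear ambient-to-focal transition would show up as a large drop in the ambient share between early and late viewing, while a less pronounced transition would show a smaller drop.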

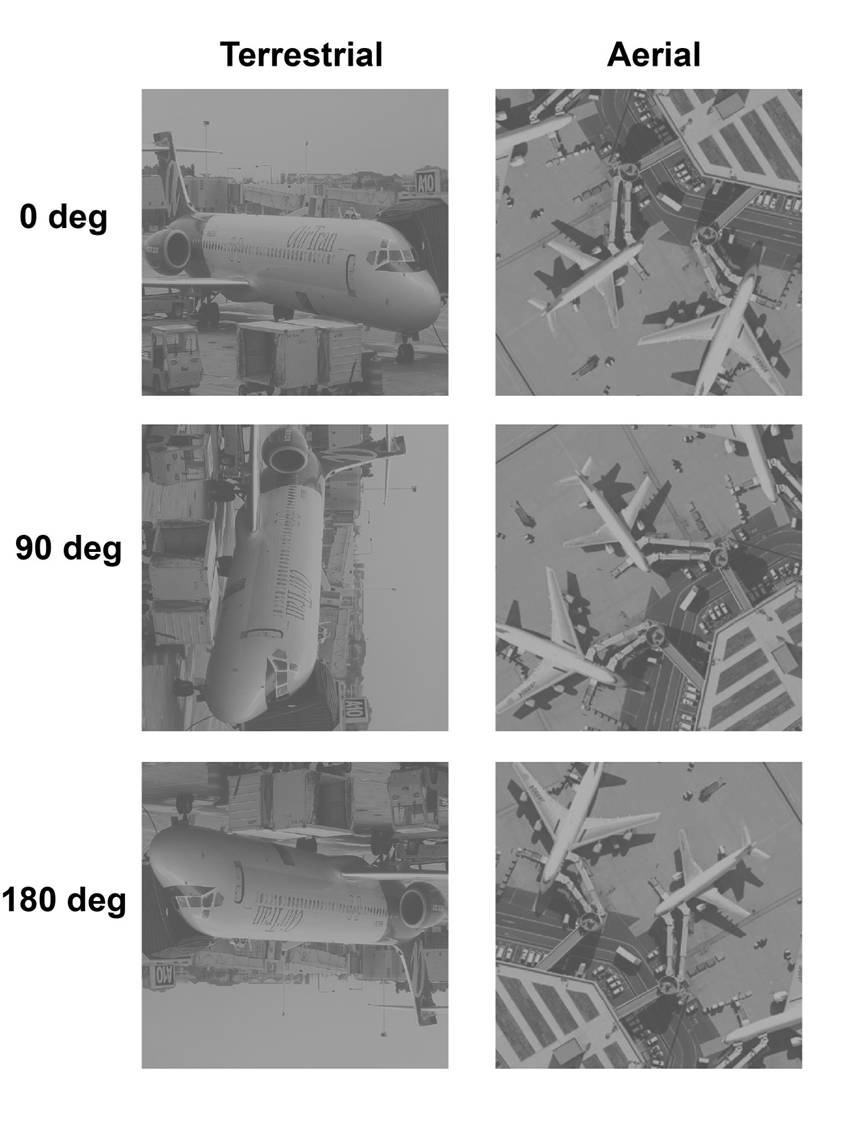

A possible explanation for the difficulty in recognizing aerial scenes is that they lack important information that is present in terrestrial scenes: specifically, the perceptual upright (Loschky, Ringer, Ellis & Hansen, 2014, in press). The perceptual upright can be used to classify terrestrial scenes holistically, so its absence could explain why aerial scenes are harder to recognize. On the other hand, because aerial scenes lack the perceptual upright, they are affected very little by rotation, while terrestrial scenes are significantly affected by rotation and become much more difficult to recognize. As the degree of rotation of a terrestrial scene increases, the scene becomes harder to identify, especially at oblique angles such as 135°.

Texture, however, could be a useful way to distinguish between aerial scenes. Aerial scenes contain more distinct, recognizable categories of textures than terrestrial scenes, which can make texture a useful cue, but only at longer processing times (330 ms vs. 36 ms). This indicates that using texture to identify aerial scenes occurs later in processing and requires effort (Loschky, Ringer, Ellis & Hansen, 2014, in press). It is likely that texture is only one of many cues used to identify scenes. For example, past research has indicated that low-level, global characteristics of scenes play a common role in classification, whether the scenes are terrestrial, aerial, or even inverted or rotated (see picture above). This means that people may be able to identify a scene even when some specific details are lacking and only coarse statistical information is available. Our research suggests that representations of low-level information may even be apparent in MEG data (Ramkumar et al., 2012).

By continuing to research the differences between aerial and terrestrial scenes, we can learn more about how people process, recognize, and identify scenes, as well as how the layout of a scene itself can influence eye movements. This research also has implications for the fields of electrical engineering and computer science, and could be used to increase comprehension of satellite imagery.

Related Publications

Related Conference Presentations

Pannasch, S., Hansen, B. C., Larson, A. M., & Loschky, L. C. (2012, June). Further insights into ambient and focal modes: Evidence from the processing of aerial and terrestrial views. Invited talk presented at the Fifth International Conference on Cognitive Science, Kaliningrad (former Königsberg), Russia.